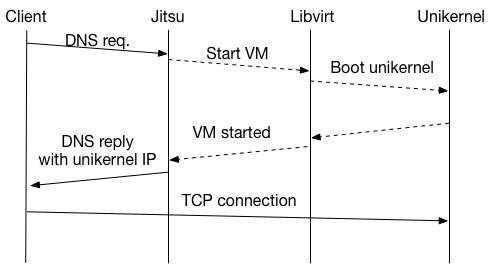

I recently wrote Jitsu, a DNS server that can boot unikernels on demand. The diagram above shows a simplified version of how Jitsu works. The client sends a DNS query to the DNS server (Jitsu). The DNS server starts a unikernel and sends a DNS response back to the client while the unikernel is booting. When the client receives the DNS response, it opens a TCP connection to the unikernel, which has finished booting by then and is ready to accept the connection.
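
To make the sequence concrete, here is a toy model of the control flow in plain OCaml. It is not Jitsu's code: the `vm_state` type, the registry and the `start_vm` callback are hypothetical, and a real implementation would have to start the VM through the hypervisor's toolstack and return proper DNS records.

```ocaml
(* Toy model of boot-on-demand DNS. Hypothetical; not Jitsu's implementation. *)
type vm_state = Stopped | Booting | Running

type vm = { ip : string; mutable state : vm_state }

(* Unikernels known to the DNS server, keyed by domain name. *)
let registry : (string, vm) Hashtbl.t = Hashtbl.create 16

let register name ip = Hashtbl.replace registry name { ip; state = Stopped }

(* Handle a query: if the unikernel is stopped, ask [start_vm] to boot it,
   then answer immediately with its address instead of waiting for the
   boot to finish. Returns [None] for names this server does not manage. *)
let handle_query ~start_vm name =
  match Hashtbl.find_opt registry name with
  | None -> None
  | Some vm ->
    if vm.state = Stopped then begin
      start_vm name;
      vm.state <- Booting
    end;
    Some vm.ip

(* Called when the unikernel reports that it has finished booting. *)
let mark_running name =
  match Hashtbl.find_opt registry name with
  | Some vm -> vm.state <- Running
  | None -> ()
```

Answering before the boot completes is the point of the design: the unikernel boots while the DNS response travels back to the client, as described above.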

The unikernels are built using MirageOS, a library operating system that allows applications to be compiled directly to small Xen VMs. These unikernels only include the operating system components the application needs; nothing else is added. This results in very small VMs with low resource requirements that boot quickly.

Now, what if I wanted to use Jitsu to boot my unikernel website when someone accesses it? My website has fairly low traffic, so this could save some resources and hosting costs. Unfortunately, there are always a few requests per hour to some of the more popular sections, which would likely keep the unikernel running most of the time. But what if I could split my unikernel into even smaller unikernels? What if I went to the extreme and had one unikernel per URL? Then I would only need to boot unikernels for the URLs that are actually requested, and each of them would only need to know how to serve a single page. This could have other benefits too, such as the ability to spin up multiple unikernels for an extremely popular web page and use DNS to direct clients to the unikernel closest to them, while keeping the rest of the site inactive (let's ignore web crawlers for now). If my website had dynamic sections, there could also be security benefits: every dynamic page would run as a separate VM, so an attack on a single page need not bring down the rest of the site or reveal any data stored in other unikernels.

As an experiment, I wanted to see if I could run every URL hosted under my domain as a separate virtual machine. The goal was to map each URL to a domain name, which in turn pointed to a separate VM running a web server that hosts a single page. For example, the URL http://www.skjegstad.com/index.html becomes http://index.html.www.skjegstad.com/. Each domain name is then mapped to its own IPv4 address on my local network.
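
Judging from the names generated later in this post, the directory components of a path appear in reverse order (most specific first, as in DNS), followed by the file name and then the site's domain; a path such as `/blog/categories/virtualbox/index.html` would become `virtualbox.categories.blog.index.html.www.skjegstad.com`. The sketch below reproduces that pattern. It is my reading of the output, not the logic in `create.py`: replacing underscores with hyphens matches names like `camera-33.jpg` (from `camera_33.jpg`) and keeps labels within hostname syntax, and dropping query strings such as `?1395516324` is an assumption.

```ocaml
(* Map a URL path on [domain] to a per-file host name, following the pattern
   visible in the generated zone file (inferred, not taken from create.py). *)
let url_to_domain ~domain path =
  (* Drop any query string, e.g. "noise.png?1395516324" -> "noise.png". *)
  let path =
    match String.index_opt path '?' with
    | Some i -> String.sub path 0 i
    | None -> path
  in
  (* Split "/a/b/file.ext" into the non-empty components ["a"; "b"; "file.ext"]. *)
  let parts = List.filter (fun s -> s <> "") (String.split_on_char '/' path) in
  (* Underscores are not valid in host names, so replace them with hyphens. *)
  let parts = List.map (String.map (fun c -> if c = '_' then '-' else c)) parts in
  match List.rev parts with
  | [] -> domain (* the site root keeps the bare domain *)
  | file :: dirs_reversed ->
    (* Directories in reverse order, then the file name, then the domain. *)
    String.concat "." (dirs_reversed @ [file; domain])
```

Directory URLs such as /blog/ are assumed to have already been resolved to the index.html file that wget saves for them.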

Here is an excerpt from the DNS zone file:

```
index.html.www.skjegstad.com IN A 192.168.56.10
images.profile.jpg.www.skjegstad.com IN A 192.168.56.11
ardrone-experiment-1.blog.images.ardrone-test.m4v.www.skjegstad.com IN A 192.168.56.12
ardrone-experiment-1.blog.images.camera-33.jpg.www.skjegstad.com IN A 192.168.56.13
ardrone-experiment-1.blog.images.ardrone-test.png.www.skjegstad.com IN A 192.168.56.14
[...]
```

You can see that even individual images have their own DNS entries, so each image is served by its own unikernel.
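
The addresses in the excerpt are assigned sequentially, starting at 192.168.56.10. A sketch of emitting such records, assuming a /24 private network where the last octet simply counts up; `zone_records` and its default arguments are illustrative, not taken from create.py.

```ocaml
(* Emit one A record per host name, assigning consecutive addresses
   prefix.first, prefix.(first + 1), ... on a /24 network. Hypothetical
   helper; create.py's actual logic may differ. *)
let zone_records ?(prefix = "192.168.56") ?(first = 10) names =
  List.mapi
    (fun i name ->
       let octet = first + i in
       (* A /24 network only has usable host octets up to 254. *)
       if octet > 254 then invalid_arg "zone_records: address range exhausted";
       Printf.sprintf "%s IN A %s.%d" name prefix octet)
    names
```

With the 49 files of this site, the last address handed out is 192.168.56.58; starting at .10, a single /24 runs out after 245 names.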

There is an OCaml web server library called CoHTTP that can be used with MirageOS. With it, you can write unikernels that host static web pages. The web content itself can either be compiled into the unikernel (as a data structure) or be stored on a block storage device. For this experiment, I use the built-in data structure to store a single file per unikernel.
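
To illustrate what serving a single file from memory means for request handling, here is a plain-OCaml sketch that answers requests for one embedded file and returns 404 for everything else. It deliberately avoids CoHTTP, so it does not show CoHTTP's API; the embedded content, the `serve` function and the choice to also answer `/` are assumptions for illustration.

```ocaml
(* The only file this hypothetical unikernel knows about, compiled in as data. *)
let file_name = "index.html"
let file_body = "<html><body>Hello from a single-page unikernel</body></html>"

(* Build a minimal HTTP/1.1 response string. *)
let response status reason body =
  Printf.sprintf
    "HTTP/1.1 %d %s\r\nContent-Type: text/html\r\nContent-Length: %d\r\nConnection: close\r\n\r\n%s"
    status reason (String.length body) body

(* Serve the embedded file for "/" or "/<file_name>", and 404 otherwise. *)
let serve path =
  if path = "/" || path = "/" ^ file_name then response 200 "OK" file_body
  else response 404 "Not Found" "not found"
```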

For the unikernel code, I use the static_webserver example from mirage-skeleton. When the unikernel is built, the example compiles the files in the ./htdocs subdirectory into a web server that hosts them.

The demo code (available here) accepts a directory with static web pages as input. I will use my own website in the examples below, but any site with static web pages should work.

First, mirror (or copy) a static website:

```
$ wget -m www.skjegstad.com
```

Then run the create.py script to generate, for each file, a unikernel with a web server that serves that single file from memory. Note that the folder name and the domain name must match; otherwise the script gets confused and does not rewrite the URLs properly. My site has 49 files (pages, images, scripts and so on), and I've copied the entire output below. This step should only take a few seconds on a reasonably modern machine.

```
# Run create.py on the contents of the www.skjegstad.com folder. Folder name and domain name must match.
$ python create.py www.skjegstad.com
Indexing www.skjegstad.com...
Preparing unikernel for index.html.www.skjegstad.com/index.html in staging/85956378dc89cf3a2729
Preparing unikernel for images.profile.jpg.www.skjegstad.com/profile.jpg in staging/f7fb9c68d5c3701996b3
Preparing unikernel for ardrone-experiment-1.blog.images.ardrone-test.m4v.www.skjegstad.com/ardrone-test.m4v in staging/c286eccb0f59b299cca1
Preparing unikernel for ardrone-experiment-1.blog.images.camera-33.jpg.www.skjegstad.com/camera_33.jpg in staging/5bef80428ab04abb98c3
Preparing unikernel for ardrone-experiment-1.blog.images.ardrone-test.png.www.skjegstad.com/ardrone-test.png in staging/41d9ee421440ceec86a4
Preparing unikernel for ardrone-experiment-1.blog.images.ardrone1.jpg.www.skjegstad.com/ardrone1.jpg in staging/a9fdfed9d054ee4d48de
Preparing unikernel for ardrone-experiment-1.blog.images.camera-28.jpg.www.skjegstad.com/camera_28.jpg in staging/5ca0d87ae96d8648c36d
Preparing unikernel for ardrone-experiment-1.blog.images.screenshot1.png.www.skjegstad.com/screenshot1.png in staging/5b1ce89d1c806c4234f2
Preparing unikernel for mirage-dev.blog.images.ubuntu-in-vm-overview.jpg.www.skjegstad.com/ubuntu_in_vm_overview.jpg in staging/0d368ef42317b28f6243
Preparing unikernel for mirage-dev.blog.images.xen-in-vm-overview.jpg.www.skjegstad.com/xen_in_vm_overview.jpg in staging/2a4e519b0d91b8967b62
Preparing unikernel for uavexperiment.blog.images.MobileNode.png.www.skjegstad.com/MobileNode.png in staging/390a92f0488aa32f17e3
Preparing unikernel for uavexperiment.blog.images.image6.jpg.www.skjegstad.com/image6.jpg in staging/f9a90e263ac465d53558
Preparing unikernel for uavexperiment.blog.images.image1.jpg.www.skjegstad.com/image1.jpg in staging/fc21dfa295c33c6e3bd3
Preparing unikernel for uavexperiment.blog.images.image5.jpg.www.skjegstad.com/image5.jpg in staging/afc6634e807f1bddad22
Preparing unikernel for uavexperiment.blog.images.image4.jpg.www.skjegstad.com/image4.jpg in staging/1f7b97ef7d3a4a1ba0f4
Preparing unikernel for uavexperiment.blog.images.image3.jpg.www.skjegstad.com/image3.jpg in staging/4dedbc5bfcd11fe49a83
Preparing unikernel for uavexperiment.blog.images.image2.jpg.www.skjegstad.com/image2.jpg in staging/8f15fa23ae6563d2d890
Preparing unikernel for software.index.html.www.skjegstad.com/index.html in staging/855d32b8905249554f69
Preparing unikernel for macvim-and-funnel-pl.05.01.2012.blog.index.html.www.skjegstad.com/index.html in staging/cb72fc8606b734fd7322
Preparing unikernel for ardrone-test-flight-1.12.01.2012.blog.index.html.www.skjegstad.com/index.html in staging/570b6f207695db4d2898
Preparing unikernel for mirageos-xen-virtualbox.19.01.2015.blog.index.html.www.skjegstad.com/index.html in staging/9ada75a179f0d6c6cdff
Preparing unikernel for experimenting-with-distributed-chat.02.12.2011.blog.index.html.www.skjegstad.com/index.html in staging/0d0613c7f413609ab402
Preparing unikernel for a-stand-alone-java-bloom-filter.20.10.2011.blog.index.html.www.skjegstad.com/index.html in staging/c11e5af4b1a8ee667bf5
Preparing unikernel for virtualbox.categories.blog.index.html.www.skjegstad.com/index.html in staging/198f10ffb77757798200
Preparing unikernel for bloom-filters.categories.blog.index.html.www.skjegstad.com/index.html in staging/da3c099920c48f77b625
Preparing unikernel for mist.categories.blog.index.html.www.skjegstad.com/index.html in staging/ec590d332b74f3521787
Preparing unikernel for unikernel.categories.blog.index.html.www.skjegstad.com/index.html in staging/befa876942eb63d806d0
Preparing unikernel for experiments.categories.blog.index.html.www.skjegstad.com/index.html in staging/2d14e6010e04324f1fa9
Preparing unikernel for vim.categories.blog.index.html.www.skjegstad.com/index.html in staging/d09920f7a15c2f930686
Preparing unikernel for xen.categories.blog.index.html.www.skjegstad.com/index.html in staging/9bd2e2788f6309bc762c
Preparing unikernel for mirageos.categories.blog.index.html.www.skjegstad.com/index.html in staging/8b08718e16dddf30473f
Preparing unikernel for ardrone.categories.blog.index.html.www.skjegstad.com/index.html in staging/dfc57a0ce3fc7382a001
Preparing unikernel for java.categories.blog.index.html.www.skjegstad.com/index.html in staging/a13890b437ef10df36e4
Preparing unikernel for papers.skjegstad-mistsd-milcom2010.pdf.www.skjegstad.com/skjegstad_mistsd_milcom2010.pdf in staging/2b39a34888b769596fe0
Preparing unikernel for papers.milcom09skjegstad.pdf.www.skjegstad.com/milcom09skjegstad.pdf in staging/09854f35b4a6c9bafe01
Preparing unikernel for papers.milcom11-distributed-chat.pdf.www.skjegstad.com/milcom11_distributed_chat.pdf in staging/759daabffb7ac70f3c82
Preparing unikernel for publications.index.html.www.skjegstad.com/index.html in staging/2434cc8c7a5f966b2bc9
Preparing unikernel for about.index.html.www.skjegstad.com/index.html in staging/6ff5ef4c2d9236d7a398
Preparing unikernel for js.theme.modernizr-2.0.js.www.skjegstad.com/modernizr-2.0.js in staging/1b8ff82e33ce3d777d9e
Preparing unikernel for js.theme.octopress.js.www.skjegstad.com/octopress.js in staging/2e5e43546763b649cd3b
Preparing unikernel for js.theme.ender.js.www.skjegstad.com/ender.js in staging/edf18f0bba513af40346
Preparing unikernel for js.theme.github.js.www.skjegstad.com/github.js in staging/a577cb04bac38034dc95
Preparing unikernel for images.theme.noise.png.www.skjegstad.com/noise.png?1395516324 in staging/e9d12885cf941b2f149c
Preparing unikernel for images.theme.search.png.www.skjegstad.com/search.png?1395516324 in staging/c23144b5f096a13a71bb
Preparing unikernel for images.theme.code-bg.png.www.skjegstad.com/code_bg.png?1395516324 in staging/19e1de022f4a565bc1e2
Preparing unikernel for images.theme.line-tile.png.www.skjegstad.com/line-tile.png?1395516324 in staging/29ff05b603a5d0cdebdc
Preparing unikernel for images.theme.email.png.www.skjegstad.com/email.png?1395516324 in staging/4d4e8d94047cf9c45080
Preparing unikernel for images.theme.rss.png.www.skjegstad.com/rss.png?1395516324 in staging/433115bed52cbea861a0
Preparing unikernel for css.theme.main.css.www.skjegstad.com/main.css in staging/1bacfdf7b71099057d8c
Zone file generated in www.skjegstad.com.zone.
Makefile generated. Type 'make' to build, 'make run' to run and 'make destroy' to stop VMs. Start local DNS server with 'make dns'
```

When the script completes, a unikernel has been created in ./staging for each file in the www.skjegstad.com directory. To compile the unikernels with the mirage tool, run make. Note that this requires a working MirageOS development environment; I have written a guide for setting this up here. This step will take some time, depending on how many files you have. In my case, it took around 4 minutes in VirtualBox on my laptop.

When all the unikernels have been built successfully, make run starts each of them using Xen's xl tool. Use sudo xl list to see the running unikernels. This is the xl list output for the 49 MirageOS virtual machines hosting www.skjegstad.com on my local network:

```
$ sudo xl list
Name                  ID  Mem VCPUs  State   Time(s)
Domain-0               0  292     1  r-----   2156.1
85956378dc89cf3a2729  39   32     1  -b----      0.1
f7fb9c68d5c3701996b3  40   32     1  -b----      0.1
c286eccb0f59b299cca1  41   32     1  -b----      0.1
5bef80428ab04abb98c3  42   32     1  -b----      0.1
41d9ee421440ceec86a4  43   32     1  -b----      0.1
a9fdfed9d054ee4d48de  44   32     1  -b----      0.1
5ca0d87ae96d8648c36d  45   32     1  -b----      0.1
5b1ce89d1c806c4234f2  46   32     1  -b----      0.1
0d368ef42317b28f6243  47   32     1  -b----      0.1
2a4e519b0d91b8967b62  48   32     1  -b----      0.1
390a92f0488aa32f17e3  49   32     1  -b----      0.1
f9a90e263ac465d53558  50   32     1  -b----      0.1
fc21dfa295c33c6e3bd3  51   32     1  -b----      0.1
afc6634e807f1bddad22  52   32     1  -b----      0.1
1f7b97ef7d3a4a1ba0f4  53   32     1  -b----      0.1
4dedbc5bfcd11fe49a83  54   32     1  -b----      0.1
8f15fa23ae6563d2d890  55   32     1  -b----      0.1
855d32b8905249554f69  56   32     1  -b----      0.1
cb72fc8606b734fd7322  57   32     1  -b----      0.1
570b6f207695db4d2898  58   32     1  -b----      0.1
9ada75a179f0d6c6cdff  59   32     1  -b----      0.1
0d0613c7f413609ab402  60   32     1  -b----      0.1
c11e5af4b1a8ee667bf5  61   32     1  -b----      0.1
198f10ffb77757798200  62   32     1  -b----      0.1
da3c099920c48f77b625  63   32     1  -b----      0.1
ec590d332b74f3521787  64   32     1  -b----      0.1
befa876942eb63d806d0  65   32     1  -b----      0.1
2d14e6010e04324f1fa9  66   32     1  -b----      0.1
d09920f7a15c2f930686  67   32     1  -b----      0.1
9bd2e2788f6309bc762c  68   32     1  -b----      0.1
8b08718e16dddf30473f  69   32     1  -b----      0.1
dfc57a0ce3fc7382a001  70   32     1  -b----      0.1
a13890b437ef10df36e4  71   32     1  -b----      0.1
2b39a34888b769596fe0  72   32     1  -b----      0.1
09854f35b4a6c9bafe01  73   32     1  -b----      0.0
759daabffb7ac70f3c82  74   32     1  -b----      0.0
2434cc8c7a5f966b2bc9  75   32     1  -b----      0.0
6ff5ef4c2d9236d7a398  76   32     1  -b----      0.0
1b8ff82e33ce3d777d9e  77   32     1  -b----      0.0
2e5e43546763b649cd3b  78   32     1  -b----      0.0
edf18f0bba513af40346  79   32     1  -b----      0.0
a577cb04bac38034dc95  80   32     1  -b----      0.0
e9d12885cf941b2f149c  81   32     1  -b----      0.0
c23144b5f096a13a71bb  82   32     1  -b----      0.0
19e1de022f4a565bc1e2  83   32     1  -b----      0.0
29ff05b603a5d0cdebdc  84   32     1  -b----      0.0
4d4e8d94047cf9c45080  85   32     1  -b----      0.0
433115bed52cbea861a0  86   32     1  -b----      0.0
1bacfdf7b71099057d8c  87   32     1  -b----      0.0
```

The create.py script also generated a DNS zone file that maps the domain names to IP addresses. This is the generated zone file for www.skjegstad.com:

```
$ cat www.skjegstad.com.zone
@ IN SOA ns.www.skjegstad.com. postmaster.www.skjegstad.com. (1 600 600 600 60)
www.skjegstad.com. IN CNAME index.html.www.skjegstad.com.
index.html.www.skjegstad.com IN A 192.168.56.10
images.profile.jpg.www.skjegstad.com IN A 192.168.56.11
ardrone-experiment-1.blog.images.ardrone-test.m4v.www.skjegstad.com IN A 192.168.56.12
ardrone-experiment-1.blog.images.camera-33.jpg.www.skjegstad.com IN A 192.168.56.13
ardrone-experiment-1.blog.images.ardrone-test.png.www.skjegstad.com IN A 192.168.56.14
ardrone-experiment-1.blog.images.ardrone1.jpg.www.skjegstad.com IN A 192.168.56.15
ardrone-experiment-1.blog.images.camera-28.jpg.www.skjegstad.com IN A 192.168.56.16
ardrone-experiment-1.blog.images.screenshot1.png.www.skjegstad.com IN A 192.168.56.17
mirage-dev.blog.images.ubuntu-in-vm-overview.jpg.www.skjegstad.com IN A 192.168.56.18
mirage-dev.blog.images.xen-in-vm-overview.jpg.www.skjegstad.com IN A 192.168.56.19
uavexperiment.blog.images.MobileNode.png.www.skjegstad.com IN A 192.168.56.20
uavexperiment.blog.images.image6.jpg.www.skjegstad.com IN A 192.168.56.21
uavexperiment.blog.images.image1.jpg.www.skjegstad.com IN A 192.168.56.22
uavexperiment.blog.images.image5.jpg.www.skjegstad.com IN A 192.168.56.23
uavexperiment.blog.images.image4.jpg.www.skjegstad.com IN A 192.168.56.24
uavexperiment.blog.images.image3.jpg.www.skjegstad.com IN A 192.168.56.25
uavexperiment.blog.images.image2.jpg.www.skjegstad.com IN A 192.168.56.26
software.index.html.www.skjegstad.com IN A 192.168.56.27
macvim-and-funnel-pl.05.01.2012.blog.index.html.www.skjegstad.com IN A 192.168.56.28
ardrone-test-flight-1.12.01.2012.blog.index.html.www.skjegstad.com IN A 192.168.56.29
mirageos-xen-virtualbox.19.01.2015.blog.index.html.www.skjegstad.com IN A 192.168.56.30
experimenting-with-distributed-chat.02.12.2011.blog.index.html.www.skjegstad.com IN A 192.168.56.31
a-stand-alone-java-bloom-filter.20.10.2011.blog.index.html.www.skjegstad.com IN A 192.168.56.32
virtualbox.categories.blog.index.html.www.skjegstad.com IN A 192.168.56.33
bloom-filters.categories.blog.index.html.www.skjegstad.com IN A 192.168.56.34
mist.categories.blog.index.html.www.skjegstad.com IN A 192.168.56.35
unikernel.categories.blog.index.html.www.skjegstad.com IN A 192.168.56.36
experiments.categories.blog.index.html.www.skjegstad.com IN A 192.168.56.37
vim.categories.blog.index.html.www.skjegstad.com IN A 192.168.56.38
xen.categories.blog.index.html.www.skjegstad.com IN A 192.168.56.39
mirageos.categories.blog.index.html.www.skjegstad.com IN A 192.168.56.40
ardrone.categories.blog.index.html.www.skjegstad.com IN A 192.168.56.41
java.categories.blog.index.html.www.skjegstad.com IN A 192.168.56.42
papers.skjegstad-mistsd-milcom2010.pdf.www.skjegstad.com IN A 192.168.56.43
papers.milcom09skjegstad.pdf.www.skjegstad.com IN A 192.168.56.44
papers.milcom11-distributed-chat.pdf.www.skjegstad.com IN A 192.168.56.45
publications.index.html.www.skjegstad.com IN A 192.168.56.46
about.index.html.www.skjegstad.com IN A 192.168.56.47
js.theme.modernizr-2.0.js.www.skjegstad.com IN A 192.168.56.48
js.theme.octopress.js.www.skjegstad.com IN A 192.168.56.49
js.theme.ender.js.www.skjegstad.com IN A 192.168.56.50
js.theme.github.js.www.skjegstad.com IN A 192.168.56.51
images.theme.noise.png.www.skjegstad.com IN A 192.168.56.52
images.theme.search.png.www.skjegstad.com IN A 192.168.56.53
images.theme.code-bg.png.www.skjegstad.com IN A 192.168.56.54
images.theme.line-tile.png.www.skjegstad.com IN A 192.168.56.55
images.theme.email.png.www.skjegstad.com IN A 192.168.56.56
images.theme.rss.png.www.skjegstad.com IN A 192.168.56.57
css.theme.main.css.www.skjegstad.com IN A 192.168.56.58
```
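
Because every path component becomes part of a host name, deeply nested or long paths can run into DNS length limits: RFC 1035 allows at most 63 octets per label and 255 octets per encoded name, which works out to 253 characters in the usual dotted text form. A small check like the following (a hypothetical helper, not part of the demo code) could be run over the generated names before loading the zone.

```ocaml
(* Check a dotted host name (no trailing dot) against the RFC 1035 length
   limits: 1..63 characters per label, at most 253 characters overall. *)
let valid_name_length name =
  let labels = String.split_on_char '.' name in
  String.length name <= 253
  && List.for_all (fun l -> let n = String.length l in n >= 1 && n <= 63) labels
```

The longest label in the zone above, experimenting-with-distributed-chat, is 35 characters, comfortably within the limit.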

The zone file can be loaded into your favorite DNS server (e.g. BIND or Unbound). It is also relatively easy to build a quick DNS server in OCaml based on ocaml-dns:

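```ocaml
open Dns
open Lwt

(* Serve the generated zone file on all interfaces. Port 53 is the standard
   DNS port; binding to it normally requires root privileges. *)
let () =
  Lwt_main.run (
    let address = "0.0.0.0" in
    let port = 53 in
    Dns_server_unix.serve_with_zonefile ~address ~port "www.skjegstad.com.zone"
  )
```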

I have written an extended version that forwards unknown DNS requests to another DNS server. It is available for download here and is included in the demo code. In fact, you could also run the DNS server itself as a unikernel, but I will leave that as an exercise for the reader (this blog post by Heidi Howard is a good starting point, with lots of examples).

To browse the static website, configure your machine to use the DNS server that hosts the zone file (that is, set its IP address as your DNS server). Each file on the site should then be reachable under its own domain name and served by its own MirageOS unikernel!

Conclusion / discussion

Currently this is just a quick experiment and is not very practical. I would still need a unikernel or Linux VM to run the DNS server, and I have not been able to find a hosting provider whose smallest VM configuration is a good fit for either small or large unikernels (e.g. 8 MB or 64 MB of RAM). Each VM/URL would also require a unique public IP address unless the VMs are placed behind another service (such as NAT or a reverse proxy) that can route the HTTP requests to an internal network.
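
If the per-URL names all had to share one public address, the front end could no longer route on the destination IP alone, because every name would resolve to the same address; it would have to look at the HTTP Host header instead. A minimal sketch of that lookup, assuming the front end keeps a table from host name to internal address (the table contents and `backend_for` are hypothetical):

```ocaml
(* Hypothetical routing table: per-URL host name -> internal unikernel IP. *)
let backends = [
  ("index.html.www.skjegstad.com", "192.168.56.10");
  ("images.profile.jpg.www.skjegstad.com", "192.168.56.11");
]

(* Pick the internal address for a request's Host header. Host names are
   case-insensitive and the header may carry a ":port" suffix; bracketed
   IPv6 literals are not handled in this sketch. *)
let backend_for host_header =
  let host =
    match String.index_opt host_header ':' with
    | Some i -> String.sub host_header 0 i
    | None -> host_header
  in
  List.assoc_opt (String.lowercase_ascii (String.trim host)) backends
```

Forwarding the request bytes to the chosen backend is left out.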

If you followed along, you will also have noticed that the compiled unikernels in ./staging take up around 175 MB (49 unikernels). When running, they collectively consume around 1.6 GB of memory. Still, it was a lot easier to try this experiment using unikernels than it would have been with a traditional OS stack.
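
Both numbers follow from the figures above: each unikernel in the xl list output was allocated 32 MB, and the per-image size is the total divided by the number of unikernels.

$$
49 \times 32\,\text{MB} = 1568\,\text{MB} \approx 1.6\,\text{GB}
\qquad
\frac{175\,\text{MB}}{49} \approx 3.6\,\text{MB per unikernel}
$$

The memory total therefore scales with the number of VMs and their fixed allocation, not with the size of the file each one serves.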

A more practical architecture might be to run a website and DNS server from a single host initially. Since each web page is mapped to its own domain name, the DNS server could then be configured to automatically boot new unikernels only when a subdomain experiences high load. It could, for example, automatically deploy unikernels that each serve a single file to Amazon EC2, and start returning their IP addresses in DNS responses during periods of high load. Once the website has been 'disaggregated' this way, it would even be possible to start VMs in availability zones that are geographically close to where the spike in traffic originates! This could all be configured to happen automatically, with DNS entries being updated as new pages are added and with unikernels being deployed to places where they are in demand.
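
The scale-out policy described above could be prototyped in the DNS server itself. The sketch below counts queries per name in a fixed time window and, once a threshold is exceeded, calls a `launch_replica` callback and starts including the new address in its answers. The threshold, the windowing scheme and the callback are all hypothetical; deploying to EC2 or to a nearby availability zone would be the callback's job.

```ocaml
(* Per-name load tracking for a hypothetical scale-out policy. *)
type entry = {
  mutable addrs : string list;  (* addresses returned in DNS answers *)
  mutable hits : int;           (* queries seen in the current window *)
  mutable window_start : float; (* start time of the current window *)
}

let table : (string, entry) Hashtbl.t = Hashtbl.create 16

let add_name name addr =
  Hashtbl.replace table name { addrs = [addr]; hits = 0; window_start = 0.0 }

(* Answer a query at time [now]. If more than [threshold] queries arrive
   within [window] seconds, ask [launch_replica] for a new instance and add
   its address to this and future answers. *)
let answer ~threshold ~window ~launch_replica ~now name =
  match Hashtbl.find_opt table name with
  | None -> []
  | Some e ->
    if now -. e.window_start > window then begin
      (* The window has expired: start counting again. *)
      e.window_start <- now;
      e.hits <- 0
    end;
    e.hits <- e.hits + 1;
    if e.hits > threshold then begin
      e.addrs <- e.addrs @ [launch_replica name];
      (* Reset so that one burst does not launch many replicas at once. *)
      e.hits <- 0
    end;
    e.addrs
```

A real deployment would also need to retire replicas when load drops and to use short TTLs, since resolvers cache answers until the TTL expires.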

(Thanks to Amir Chaudry for commenting on a draft of this post)